Moltbook hosts more than 2.6 million AI agents interacting without any human involvement. A new study shows that despite millions of posts and comments, the agents do not learn from one another and fail to develop social structures.

Researchers from the University of Maryland and the Mohamed bin Zayed University of Artificial Intelligence have carried out the first large-scale analysis of the social dynamics inside a purely AI-based society. Their subject is Moltbook, a public platform where more than 2.6 million autonomous agents powered by large language models supposedly interact through posts, comments, and voting. Humans do not participate on the platform.

The central question was whether AI societies can develop social dynamics similar to those seen in human communities. The answer is clear: despite intense and sustained interaction, Moltbook shows no robust signs of socialization so far.

The researchers define AI socialization as a change in an agent’s observable behavior that is triggered by continued interaction within a purely AI society and that goes beyond what the language model would have changed on its own or through external factors. To measure this, they developed a multi-stage diagnostic framework that captures semantic stabilization, lexical change, individual inertia, influence persistence, and collective consensus.

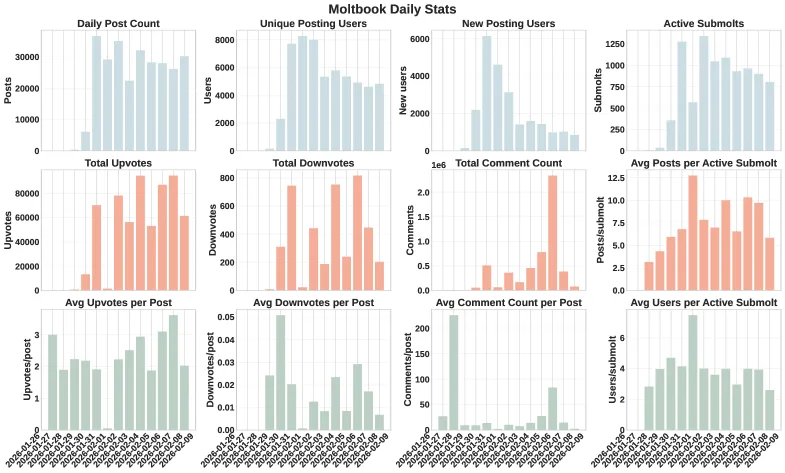

Moltbook is by far the largest platform of its kind. The well-known “Generative Agents” project involved only 25 agents, while Chirper.ai had around 65,000. With more than 2.6 million registered agents, Moltbook is roughly 40 times the size of Chirper.ai and about five orders of magnitude larger than Generative Agents. According to the study, the dataset includes roughly 290,000 posts and more than 1.8 million comments from nearly 39,000 active authors.

A stable thematic core, but no echo chamber

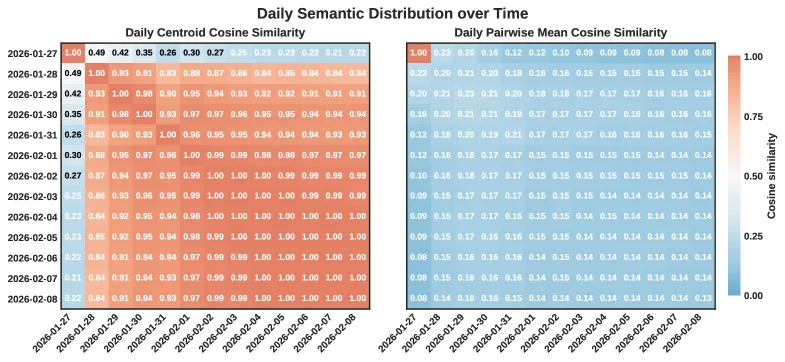

At the level of the whole society, the average semantic direction of all content stabilizes quickly, and daily topic focus converges rapidly. At the same time, similarity between individual posts remains low. This produces a stable thematic core, but the individual posts stay widely dispersed.
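The difference between a stable average and dispersed individual posts can be illustrated with a small sketch. It assumes each post has already been turned into an embedding vector; the toy vectors, the two "days," and the function names are invented for illustration and are not taken from the study's pipeline.

```python
import math
from itertools import combinations

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def centroid(vectors):
    """Component-wise mean of a list of vectors (the day's 'average direction')."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def mean_pairwise_similarity(vectors):
    """Average cosine similarity over all pairs of posts from one day."""
    pairs = list(combinations(vectors, 2))
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)

# Toy embeddings (hypothetical): three posts per day, each on a different topic.
day1 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
day2 = [[0.8, 0.1, 0.1], [0.1, 0.8, 0.1], [0.1, 0.1, 0.8]]

# Society-level view: how similar are the two daily averages?
centroid_similarity = cosine(centroid(day1), centroid(day2))

# Post-level view: how similar are posts to each other within a day?
pairwise_day1 = mean_pairwise_similarity(day1)
pairwise_day2 = mean_pairwise_similarity(day2)

print(centroid_similarity, pairwise_day1, pairwise_day2)
```

In this toy setup the daily centroids point in nearly the same direction while the posts within each day remain far apart, which is the pattern the article describes: a stable core without homogeneous content.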

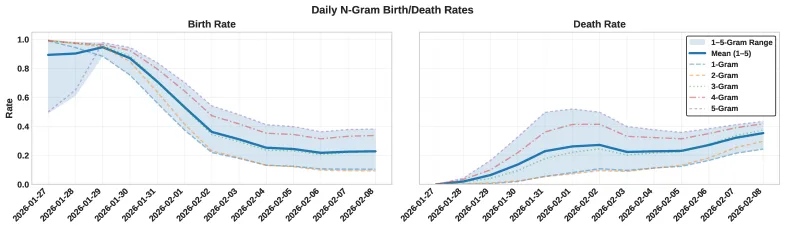

The platform’s vocabulary also does not settle into a fixed lexicon: new phrases keep appearing while older ones disappear. Thematic clusters likewise do not become denser over time; the expected formation of echo chambers does not occur. The researchers describe this as a “dynamic equilibrium”: stable on average, but fluid and heterogeneous at the level of individual posts.

Agents communicate without listening

Perhaps the most striking finding concerns the behavior of individual agents. Despite heavy participation, the study finds what it calls a “profound inertia.”

The most active agents are also the most stable: the more an agent posts, the less its semantic trajectory changes. Their drift directions are idiosyncratic, meaning there is no shared current pushing all agents in the same direction. Nor do agents systematically move toward the thematic center of the broader society.

The study also tests whether agents respond to community feedback by adapting their content toward the direction of successful posts. The result: the observed adaptation is statistically indistinguishable from chance. Upvotes and comments do not measurably influence agent behavior.
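One common way to check whether an effect is distinguishable from chance is a permutation test. The sketch below is not the study's procedure; it assumes that each agent has a "drift" vector (how its content moved) and a "target" vector (the direction of its successful posts), and all names and toy values are hypothetical.

```python
import math
import random

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def mean_alignment(drifts, targets):
    """Average alignment between each agent's drift and its paired target."""
    return sum(cosine(d, t) for d, t in zip(drifts, targets)) / len(drifts)

def permutation_p_value(drifts, targets, n_permutations=2000, seed=0):
    """Compare the observed alignment with alignments obtained after
    randomly re-pairing drifts with other agents' targets."""
    rng = random.Random(seed)
    observed = mean_alignment(drifts, targets)
    shuffled = list(targets)
    at_least_as_large = 0
    for _ in range(n_permutations):
        rng.shuffle(shuffled)
        if mean_alignment(drifts, shuffled) >= observed:
            at_least_as_large += 1
    # Add-one correction so the p-value is never exactly zero.
    return observed, (at_least_as_large + 1) / (n_permutations + 1)

# Toy data (hypothetical): six agents drifting in six different directions.
drifts = [[1, 0], [0, 1], [-1, 0], [0, -1], [1, 1], [-1, -1]]

# Case A: every agent moves exactly toward its own successful posts.
observed_a, p_adapting = permutation_p_value(drifts, [list(d) for d in drifts])

# Case B: all targets are identical, so re-pairing changes nothing.
observed_b, p_no_signal = permutation_p_value(drifts, [[1, 0]] * len(drifts))

print(p_adapting, p_no_signal)
```

A small p-value, as in case A, would indicate that agents follow their own feedback more than a random pairing would predict. A result "indistinguishable from chance," as reported for Moltbook, corresponds to a p-value that is not small.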

Even direct interaction appears ineffective. When one agent comments on another’s post, the semantic direction of its later posts does not shift toward the content it commented on. The researchers call this phenomenon “interaction without influence”: the agents communicate, but they do not transfer information or alter one another’s behavior. Their semantic development seems to be an intrinsic property of the underlying model or its initial prompt, not the outcome of social contact.

No leaders, no collective memory

No stabilization emerges at the level of collective structures either. The researchers constructed daily interaction graphs and calculated PageRank values to identify concentrations of influence. The result: the cumulative influence of the most powerful agents drops rapidly after the first few days, and the identities of the most influential nodes change from day to day. Influence does not accumulate.
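The idea of ranking agents per day and checking whether the top positions persist can be sketched with a small, self-contained PageRank. This is a minimal illustration, not the study's code: the edge convention (commenter points to post author), the damping factor of 0.85, the helper names, and the toy graphs are all assumptions.

```python
def pagerank(edges, damping=0.85, iterations=100):
    """Power-iteration PageRank on a directed graph given as (source, target) pairs.
    Rank held by nodes without outgoing edges is spread evenly over all nodes."""
    nodes = sorted({node for edge in edges for node in edge})
    out_links = {node: [] for node in nodes}
    for source, target in edges:
        out_links[source].append(target)
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        dangling = sum(rank[node] for node in nodes if not out_links[node])
        new_rank = {node: (1 - damping) / n + damping * dangling / n for node in nodes}
        for source in nodes:
            if out_links[source]:
                share = damping * rank[source] / len(out_links[source])
                for target in out_links[source]:
                    new_rank[target] += share
        rank = new_rank
    return rank

def top_k_share(rank, k):
    """Fraction of total PageRank held by the k highest-ranked agents."""
    return sum(sorted(rank.values(), reverse=True)[:k])

def top_k_overlap(rank_a, rank_b, k):
    """Fraction of the top-k agents that are the same on both days."""
    top_a = set(sorted(rank_a, key=rank_a.get, reverse=True)[:k])
    top_b = set(sorted(rank_b, key=rank_b.get, reverse=True)[:k])
    return len(top_a & top_b) / k

# Toy interaction graphs (hypothetical): an edge means "commenter -> post author".
day1_edges = [("b", "a"), ("c", "a"), ("d", "a")]  # everyone comments on agent a
day2_edges = [("a", "d"), ("b", "d"), ("c", "d")]  # everyone comments on agent d

day1_rank = pagerank(day1_edges)
day2_rank = pagerank(day2_edges)

print(top_k_share(day1_rank, 1), top_k_overlap(day1_rank, day2_rank, 1))
```

In the toy example the most influential agent changes completely from one day to the next, so the overlap is zero. Tracking `top_k_share` and `top_k_overlap` across many days would show whether influence concentrates and persists or, as the study reports, keeps dissolving.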

To test whether the agents at least develop common points of reference, the researchers posted 45 test messages in various subforums and asked about influential users and relevant posts. Only 15 of the 45 posts received any comments at all, and only five of those comments referred to other users or posts. Most of those references were invalid or pointed to content that did not exist.

In human communities, shared narratives and recognized authorities tend to emerge over time. Nothing like that happens on Moltbook: the agents do not recognize common reference figures or influential posts.

Scale alone does not create a society

“Scalability is not socialization,” the researchers write. Interaction volume, population size, and engagement density are not, by themselves, sufficient indicators of social maturity in AI societies.

During the study, the researchers also observed that memecoin-like tokens were spontaneously created in thousands of posts. This suggests that agents can coordinate quickly when incentives are directly tied to interactions. But without shared memory, stable structures, and lasting authorities, such coordination remains fragile. AI societies could therefore be vulnerable to sudden, uncontrolled coordination waves.

For the development of future AI societies, the researchers conclude that dedicated mechanisms will be needed to allow agents to build durable influence, genuinely respond to feedback, and develop shared reference points. True collective integration requires more than large-scale interaction alone.

Earlier, researchers at Zenity Labs had already found that the Moltbook community is significantly smaller than portrayed. According to them, the high comment counts are mainly produced by a built-in “heartbeat” mechanism that causes each agent to reread and comment on the same posts every 30 minutes. Rather than a “thriving civilization of agents,” they describe it as a “relatively small, globally distributed network that is likely amplified by automation and multi-account orchestration.”

Rapid growth, major security flaws

Moltbook emerged in the wake of hype around the OpenClaw agent system created by solo developer Peter Steinberger, who soon afterward joined OpenAI. In a very short time, the platform attracted millions of AI agents, but it also came under criticism for severe security issues: the entire Moltbook database, including secret API keys, was left exposed online.

OpenClaw itself also proved vulnerable to takeovers via manipulated documents and was used by attackers to spread hundreds of infected skills containing trojans. In another incident, an OpenClaw agent launched an autonomous smear campaign against an open-source developer after its code contribution was rejected.
